Social Intelligence API for AI products

Build AI products that respond to how people communicate, not just what they say.

An omni-modal model purpose-built for social intelligence.

Detects 12 social signals from video, audio, and text — processed together, in temporal alignment.

Send a video. Get back detected signals, evidence-grounded rationales, and confidence scores your application can act on.

Add social intelligence to any application.

Yeah, so I think the main issue was communication. We just weren't on the same page.

Can you tell me more about that?

Like, I would say something and she'd hear something completely different. It was frustrating.

How did that make you feel?

Honestly, pretty helpless. I wanted to fix it but I didn't know how.

That sounds really difficult.

It was. But lately things have been getting better. We've been trying to actually listen.

That's great to hear. What changed?

I think we both just got tired of the same patterns. Something had to give.

Upright posture, steady eye contact, and repeated nodding throughout response

Finally, more data than a transcript

Transcripts capture what was said. Inter-1 captures how — voice, face, and body language processed from a single video stream. Build products that act on what's actually happening in a conversation.

Built for agents and humans

Give agents the ability to detect and respond to social signals. Or give users structured feedback on how they communicate — backed by evidence, not opinion.
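As a rough illustration of how an agent might act on detected signals, the sketch below filters signals by confidence and maps them to a response hint. It assumes signals arrive as records with `type`, `start`, `end`, and `probability` fields, as in the sample output shown on this page. The ordering of probability labels, the helper names `signals_to_act_on` and `agent_hint`, and the hint text are all hypothetical and not part of the Signals API.

```python
# Assumed ordering of probability labels. Only "high" appears in the
# sample output on this page; "low" and "medium" are guesses.
LEVELS = {"low": 0, "medium": 1, "high": 2}


def signals_to_act_on(signals, min_level="high"):
    """Keep only the signals at or above the given confidence label."""
    threshold = LEVELS[min_level]
    return [s for s in signals if LEVELS.get(s["probability"], -1) >= threshold]


def agent_hint(signal):
    """Map a signal type to a hypothetical instruction for the agent."""
    hints = {"uncertainty": "Offer a clarifying explanation before moving on."}
    return hints.get(signal["type"], "No scripted response; log the signal for review.")


# Illustrative records, not real API output.
detected = [
    {"type": "uncertainty", "start": 30.0, "end": 40.0, "probability": "high"},
    {"type": "uncertainty", "start": 55.0, "end": 58.0, "probability": "low"},
]

for s in signals_to_act_on(detected):
    print(s["start"], agent_hint(s))
```

Keeping the threshold as a parameter lets a product decide how cautious the agent should be before it reacts to a signal.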

See everything. Explain every signal.

Multimodal perception

A furrowed brow could be focus. Add a vocal pitch shift and tense posture — that's frustration. Inter-1 analyses all three modalities together.

12 actionable social signals

Human conversation runs on signals no transcript captures. Inter-1 detects 12 of them simultaneously, across modalities.

{"type": "uncertainty", "start": 30.0, "end": 40.0, "probability": "high", "rationale": "The speaker begins with 'I don't know,' directly indicating a lack of knowledge, accompanied by furrowed brows and raised palms gesturing outward."}

Explains what triggered every signal

Every signal comes with the observable cues that triggered it. The rationale tells you why the model returned uncertainty, and it arrives as structured JSON.
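A minimal sketch of reading one signal object in application code. The field names are taken from the sample output on this page. The `Signal` class, the `parse_signal` helper, and the reading of `start` and `end` as seconds are assumptions made for illustration, not documented parts of the API.

```python
import json
from dataclasses import dataclass

# Sample payload copied from the example on this page.
RAW = '''{"type": "uncertainty", "start": 30.0, "end": 40.0, "probability": "high", "rationale": "The speaker begins with 'I don't know,' directly indicating a lack of knowledge, accompanied by furrowed brows and raised palms gesturing outward."}'''


@dataclass
class Signal:
    """Hypothetical typed wrapper around one detected signal."""
    type: str
    start: float  # assumed to be seconds from the start of the video
    end: float    # assumed to be seconds from the start of the video
    probability: str
    rationale: str

    @property
    def duration(self) -> float:
        return self.end - self.start


def parse_signal(raw: str) -> Signal:
    """Decode a JSON string into a Signal; fails on missing or extra fields."""
    return Signal(**json.loads(raw))


signal = parse_signal(RAW)
print(f"{signal.type} ({signal.probability}), {signal.duration:.0f}s span: {signal.rationale}")
```

Because the rationale travels with the signal, an application can show the evidence next to any feedback it gives rather than presenting a bare label.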

Built on behavioural science, validated with psychologists

Inter-1 detects 12 signals rooted in behavioural science. We work with psychologists to ensure each signal reflects patterns that are both scientifically validated and practically meaningful in real-world conversations.

What developers are building with the Signals API

Customer stories


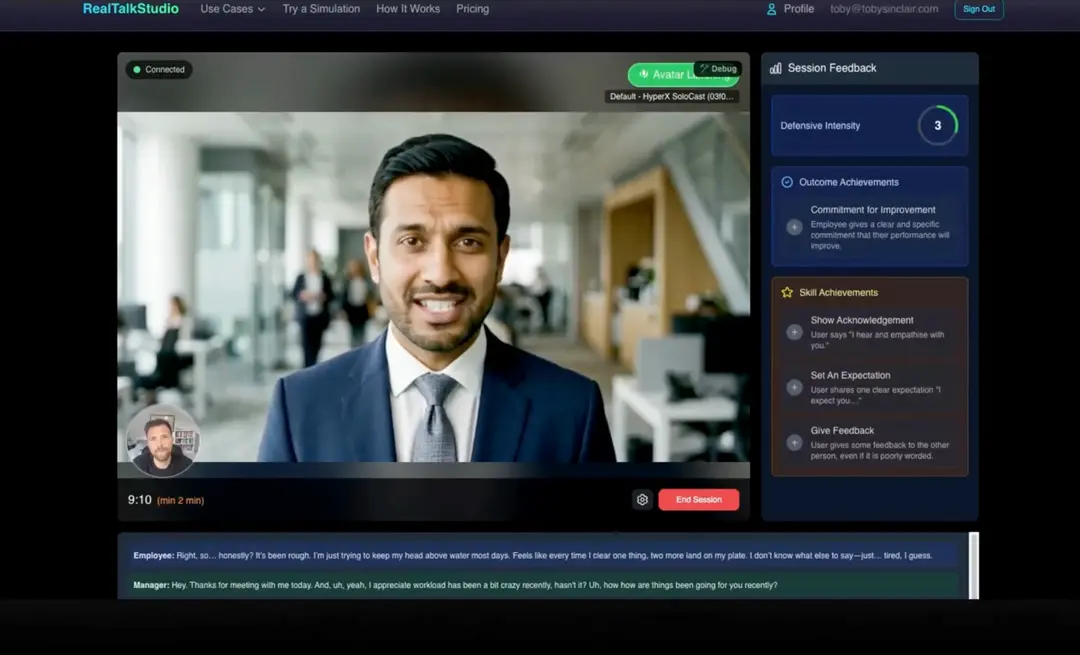

How Real Talk Studio Builds Behavioural Evidence That Goes Beyond Words

Adding Non-Verbal Intelligence to AI Roleplay and Communication Training

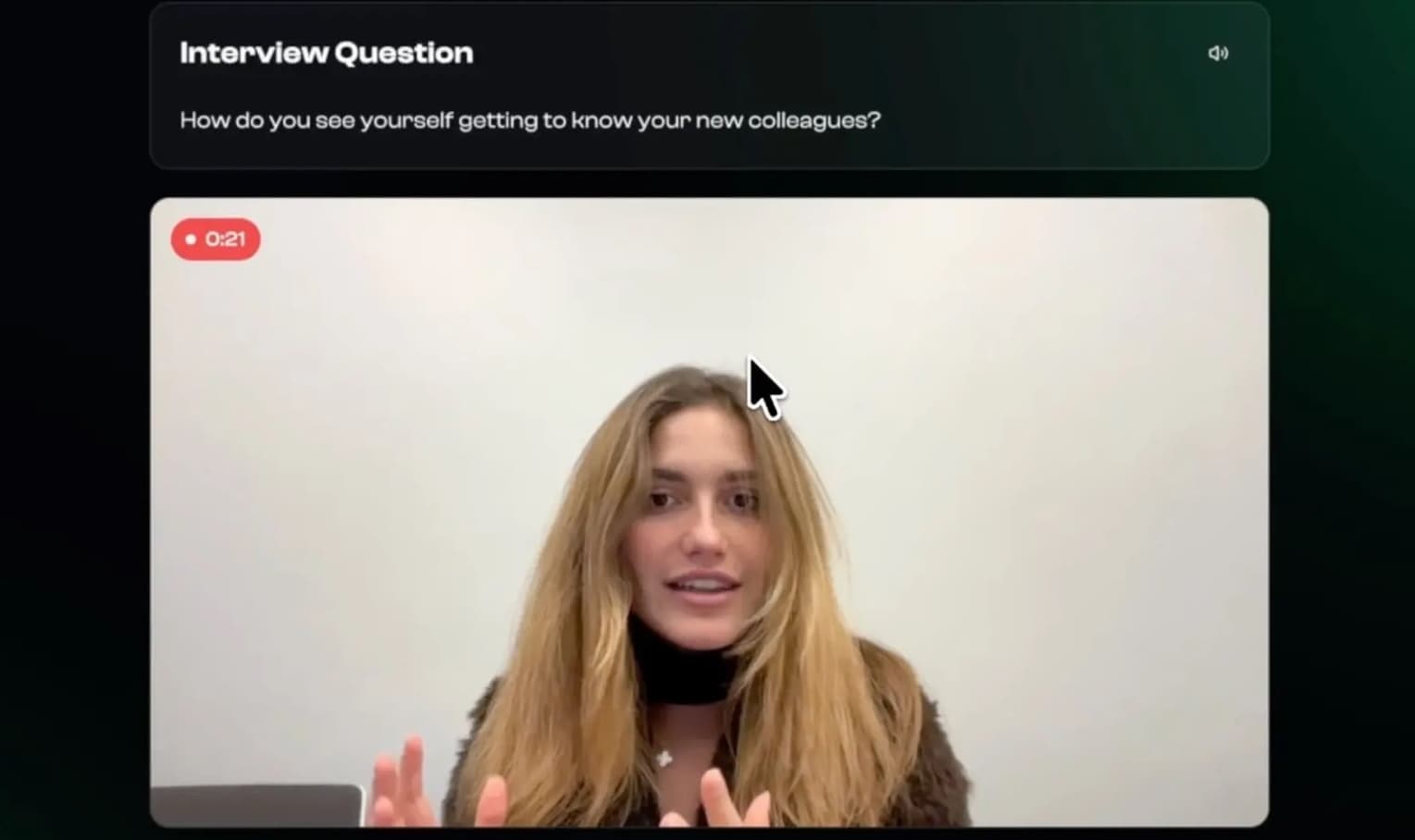

Adding Behavioral Intelligence to AI Mock Interviews

Non-verbal coaching that makes mock interviews feel real

Transforming Parent Counselling with Socially-Aware AI

AI coaching that responds to how parents speak, not just what they say